RomanAI Labs · RomanAI 4D Security Platform

Fast, Efficient Simulation of Complex Quantum Circuits — On a Standard Laptop

RQ4D-V4 uses a hybrid simulation approach to model quantum circuits with high accuracy, while staying lightweight enough to run on everyday hardware.

- MPS for accurate steps when entanglement stays limited

- Geometric compression when the problem grows past comfortable limits

- Measured RCS benchmark: 12 qubits, depth 8 — about 18 ms and ~27 KB peak memory on a 16 GB laptop

For teams who want to explore quantum-style circuits on ordinary computers — without claiming to replace real quantum hardware.

This project is intended for research and educational use. It simulates quantum circuits using classical computation and does not replace real quantum hardware.

What is RQ4D-V4

A hybrid simulation engine for quantum circuits

RQ4D-V4 is a hybrid simulation engine that combines Matrix Product States (MPS) — a method for accurately simulating systems with limited entanglement — with geometric compression, a lightweight approximation used when complexity grows.

It automatically switches between accurate and lightweight methods to stay fast while maintaining strong results.
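To make the MPS idea concrete, here is a minimal sketch of the step that keeps a Matrix Product State small: split the state across a cut with an SVD and keep only the largest singular values. The function name `truncate_bond` and the `max_bond` cap are illustrative assumptions, not RQ4D-V4's actual API, and the page does not document how the engine decides when to switch methods.

```python
import numpy as np

def truncate_bond(theta, max_bond):
    """Split a (left, right) amplitude matrix by SVD, keeping at most max_bond values.

    Returns both factors plus the discarded weight (sum of dropped squared
    singular values), a standard measure of truncation error.
    """
    u, s, vh = np.linalg.svd(theta, full_matrices=False)
    keep = min(max_bond, len(s))
    discarded = float(np.sum(s[keep:] ** 2))
    s_kept = s[:keep] / np.linalg.norm(s[:keep])  # renormalise the kept state
    left = u[:, :keep]
    right = np.diag(s_kept) @ vh[:keep, :]
    return left, right, discarded

rng = np.random.default_rng(0)

# Unentangled across the cut: a product state has exactly one nonzero singular value.
a = rng.normal(size=8) + 1j * rng.normal(size=8)
b = rng.normal(size=8) + 1j * rng.normal(size=8)
product = np.outer(a, b)
product /= np.linalg.norm(product)
left, right, err_product = truncate_bond(product, max_bond=1)

# Strongly entangled across the cut: a random state spreads weight over many values.
random_state = rng.normal(size=(8, 8)) + 1j * rng.normal(size=(8, 8))
random_state /= np.linalg.norm(random_state)
_, _, err_random = truncate_bond(random_state, max_bond=1)

print(f"discarded weight, product state: {err_product:.2e}")
print(f"discarded weight, random state:  {err_random:.2e}")
```

The product state survives truncation essentially unchanged, while the random state loses a large share of its weight. That gap is why MPS methods are accurate when entanglement stays limited and need a fallback when it does not.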

Why “store everything” gets expensive

Exact statevector memory grows exponentially with qubit count; structured simulators try to bend that curve when the physics allows — and still get harder as circuits grow.
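The arithmetic behind that curve is easy to check. The sketch below assumes 16 bytes per amplitude (complex128) and an open-boundary MPS with a capped bond dimension; both helper names are hypothetical, and the MPS figure is a raw tensor count under those assumptions, not an attempt to reproduce the memory number reported for RQ4D-V4.

```python
def statevector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory for a dense statevector: 2^n amplitudes."""
    return (2 ** n_qubits) * bytes_per_amplitude

def mps_bytes(n_qubits, max_bond, bytes_per_amplitude=16):
    """Memory for an open-boundary MPS whose bond dimensions are capped at max_bond."""
    total = 0
    for site in range(n_qubits):
        # A bond can never exceed the Hilbert-space size on either side of it.
        left = min(2 ** site, 2 ** (n_qubits - site), max_bond)
        right = min(2 ** (site + 1), 2 ** (n_qubits - site - 1), max_bond)
        total += left * 2 * right  # one (left, physical=2, right) tensor per qubit
    return total * bytes_per_amplitude

for n in (12, 30, 50):
    print(n, statevector_bytes(n), mps_bytes(n, max_bond=16))
```

At 30 qubits the dense statevector already needs 2^34 bytes (16 GiB), while the capped MPS grows only linearly with qubit count. The catch is that a fixed cap is only accurate when the circuit's entanglement actually fits under it.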

Plain note: RQ4D-V4 is classical software on ordinary hardware. It helps you study certain quantum circuits — it is not a programmable quantum processor in your laptop.

Threat surface

Cloud AI is too dangerous for real cyber work

The only fully local, zero-cloud, 4D geometric + RQ4D active defense platform, built for security work where cloud AI tools (Claude, etc.) are too risky. Sovereign operations need sovereign compute: prompts, code, and telemetry stay on hardware you own.

Prompt & code leave the perimeter

Cloud agents can send context, stack traces, and even credentials to third-party infrastructure you don't control, often without the operator noticing.

No deterministic blast radius

Ad-hoc toolchains make it impossible to prove what was exfiltrated, replayed, or retained for model training.

Latency & availability risk

Incident response in air-gapped or contested networks cannot depend on SaaS uptime or API rate limits.

Compliance theater

“Enterprise agreements” don't change physics: if data touches the cloud, your attack surface includes the vendor.

Platform

Built for the most security-conscious environments

One stack: local inference, R4D / Roma4D geometric control flow, RQ4D spacetime simulation, 4DLLM Studio — no vendor inference plane.

RomanAI 4D GGUF Runner

Runs GGUF locally on a 4D-aware path; tuned for air-gapped SOC and research benches.

Sub-second token paths on your silicon, not a remote cluster.

RQ4D Spacetime Engine · active defense + forecasting

Lattice simulation for active defense, drift forecasting, tamper-evident telemetry.

Maps logs to a defense surface you can maneuver.

R4D / Roma4D Geometric Language

Geometric programming surface for kernels, rotors, spacetime-aware control flow.

Express security logic where linear tensors are the wrong abstraction.

4DLLM Studio

Design, evaluate, harden local LLM workflows; prompts and weights stay off the cloud.

One studio for red-team narratives, blue-team runbooks, and offline eval.

Live proof

See it running — fully local, sub-second

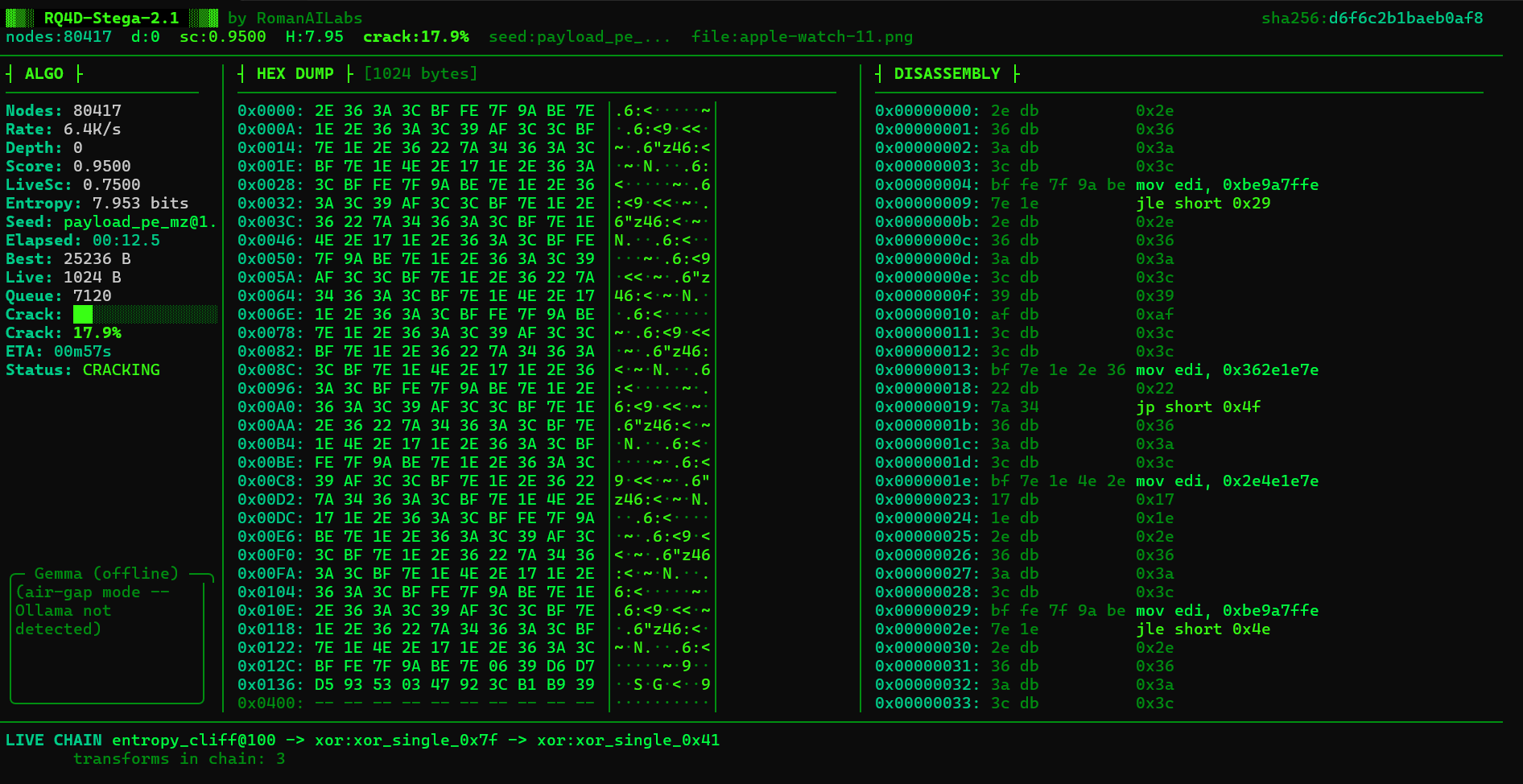

Terminal traces and RQ4D-Stega avoid cloud round-trips.

    $ romanai 4d infer --model ./vault/model.gguf
    ✓ GGUF mapped · quaternion bridge warm · latency 412ms
    $ rq4d-sidecar --backend meanfield --steps 50000
    ✓ Trotter kernel · checksum 0x…9f65 · air-gapped host
    # No outbound TLS · no vendor API keys loaded

Zero cloud inference path

Models and prompts never leave machines you operate.

On-prem & enclave ready

Packaging for classified benches and disconnected SOCs.

Reproducible benchmark

RQ4D-V4 — Random Circuit Sampling (RCS)

Measured results: figures below come from a timed run on a standard laptop with 16 GB RAM — real execution, not a synthetic timing model.

Random Circuit Sampling (RCS) builds a randomized pattern of quantum gates, draws many samples, and compares the output distribution to an ideal reference simulation. It is a common way to stress-test how closely a classical simulator tracks the “correct” quantum answer.
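The RCS recipe can be sketched end to end with a small dense simulator. Everything here is an assumption for illustration: the gate set (Haar-random single-qubit gates plus nearest-neighbor CZ in a brickwork pattern), the helper names, and the use of a plain statevector rather than RQ4D-V4's own engine. The samples are drawn from the ideal distribution itself; in a real benchmark they would come from the simulator under test.

```python
import numpy as np

def haar_unitary(rng):
    """Random 2x2 unitary from the Haar measure via QR with phase correction."""
    z = (rng.normal(size=(2, 2)) + 1j * rng.normal(size=(2, 2))) / np.sqrt(2)
    q, r = np.linalg.qr(z)
    d = np.diag(r)
    return q * (d / np.abs(d))

def apply_1q(state, gate, qubit, n):
    """Apply a 2x2 gate to one qubit of an n-qubit statevector."""
    psi = state.reshape([2] * n)
    psi = np.tensordot(gate, psi, axes=([1], [qubit]))
    psi = np.moveaxis(psi, 0, qubit)  # put the acted-on axis back in place
    return psi.reshape(-1)

def apply_cz(state, q0, q1, n):
    """Apply controlled-Z: flip the sign of amplitudes where both qubits are 1."""
    psi = state.reshape([2] * n).copy()
    idx = [slice(None)] * n
    idx[q0] = 1
    idx[q1] = 1
    psi[tuple(idx)] *= -1
    return psi.reshape(-1)

def random_circuit_probs(n, depth, rng):
    """Ideal output probabilities of one random brickwork circuit."""
    state = np.zeros(2 ** n, dtype=complex)
    state[0] = 1.0
    for layer in range(depth):
        for q in range(n):
            state = apply_1q(state, haar_unitary(rng), q, n)
        for q in range(layer % 2, n - 1, 2):  # alternate even and odd pairs
            state = apply_cz(state, q, q + 1, n)
    probs = np.abs(state) ** 2
    return probs / probs.sum()

rng = np.random.default_rng(7)
n, depth, shots = 12, 8, 4096
ideal = random_circuit_probs(n, depth, rng)
samples = rng.choice(2 ** n, size=shots, p=ideal)
empirical = np.bincount(samples, minlength=2 ** n) / shots
print("distinct bitstrings sampled:", np.count_nonzero(empirical))
```

Comparing `empirical` against `ideal` is the accuracy check the benchmark reports.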

Video demo

RQ4D — walkthrough and hardware context

Optional background on RQ4D and how classical simulation relates to real quantum processors.

RCS numbers in the tables: standard laptop (16 GB RAM) — ordinary hardware, no datacenter GPUs required for this configuration.

Benchmark: Random Circuit Sampling (RCS)

Circuit size and sampling budget for the logged run.

| Parameter | Value |
|---|---|
| Qubits | 12 |
| Depth | 8 |
| Samples | 4096 |
| Hardware | Standard laptop (16 GB RAM) |

RQ4D-V4 results (measured)

| Metric | Result |
|---|---|
| Execution time | ~18 ms |
| Memory usage | ~27 KB |
| Max bond dimension | 16 |
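
For readers who want to collect comparable figures on their own machine, here is one hedged way to measure wall-clock time and peak traced memory in Python. The `measure` helper and `placeholder_workload` are hypothetical stand-ins; the actual benchmark is driven by the project's own script, and its measurement method is not described on this page.

```python
import time
import tracemalloc
import numpy as np

def measure(fn, *args, repeats=5):
    """Return (best wall-clock seconds, peak traced bytes) for fn(*args).

    Timing runs happen first, without tracing, because tracemalloc adds
    overhead; memory is measured in a separate traced run.
    """
    timings = []
    for _ in range(repeats):
        t0 = time.perf_counter()
        fn(*args)
        timings.append(time.perf_counter() - t0)
    tracemalloc.start()
    fn(*args)
    _, peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return min(timings), peak

def placeholder_workload(n_qubits):
    """Hypothetical stand-in for a simulator call: build and square a statevector."""
    state = np.zeros(2 ** n_qubits, dtype=np.complex128)
    state[0] = 1.0
    return np.abs(state) ** 2

seconds, peak_bytes = measure(placeholder_workload, 12)
print(f"best of 5: {seconds * 1e3:.3f} ms, peak {peak_bytes / 1024:.1f} KB")
```

Traced memory covers allocations made during the call, not the whole process footprint, so it is worth stating which of the two a reported figure refers to.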

Accuracy (compared to ideal simulation)

Lower values for KL divergence and total variation distance indicate the simulation closely matches the ideal quantum result.

| Metric | Value |
|---|---|
| KL divergence | ~0.076 |
| Total variation distance | ~0.144 |
| Heavy output probability | ~0.50 |
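
The three metrics in the table have standard definitions, sketched below. The KL direction (first argument against second), the natural-log base, and the function names are assumptions, since the page does not say which conventions the benchmark uses. The demo distribution is a synthetic stand-in with exponentially distributed weights, not benchmark data.

```python
import numpy as np

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) in nats; q is floored at eps to avoid log(0)."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    mask = p > 0
    return float(np.sum(p[mask] * np.log(p[mask] / np.maximum(q[mask], eps))))

def total_variation(p, q):
    """Half the L1 distance between two distributions (0 = identical, 1 = disjoint)."""
    return 0.5 * float(np.sum(np.abs(np.asarray(p) - np.asarray(q))))

def heavy_output_probability(ideal, samples):
    """Fraction of samples whose ideal probability exceeds the median ideal probability."""
    median = np.median(ideal)
    return float(np.mean(ideal[samples] > median))

rng = np.random.default_rng(0)
dim, shots = 4096, 4096

# Synthetic "ideal" distribution with exponentially distributed weights.
ideal = rng.exponential(size=dim)
ideal /= ideal.sum()

from_ideal = rng.choice(dim, size=shots, p=ideal)  # sampler that tracks the ideal
from_uniform = rng.integers(0, dim, size=shots)    # sampler with no circuit information

hop_ideal = heavy_output_probability(ideal, from_ideal)
hop_uniform = heavy_output_probability(ideal, from_uniform)
print(f"heavy output probability: ideal sampler {hop_ideal:.3f}, uniform sampler {hop_uniform:.3f}")
```

As a reading aid: for this kind of distribution a sampler that follows the ideal lands near (1 + ln 2)/2 ≈ 0.85, while uniformly random bitstrings give about 0.5.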

XEB score: (calculation method in progress)
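For context while that method is finalized, the commonly used linear cross-entropy benchmark (XEB) estimator is short enough to sketch. This is the textbook definition, not necessarily the calculation RQ4D-V4 will adopt; `linear_xeb` is a hypothetical name and the demo distribution is synthetic.

```python
import numpy as np

def linear_xeb(ideal_probs, samples):
    """Linear XEB: 2^n times the mean ideal probability of the sampled bitstrings, minus 1."""
    dim = len(ideal_probs)
    return float(dim * np.mean(ideal_probs[samples]) - 1.0)

rng = np.random.default_rng(1)
dim, shots = 4096, 4096

# Synthetic "ideal" distribution with exponentially distributed weights.
ideal = rng.exponential(size=dim)
ideal /= ideal.sum()

xeb_ideal = linear_xeb(ideal, rng.choice(dim, size=shots, p=ideal))
xeb_uniform = linear_xeb(ideal, rng.integers(0, dim, size=shots))
print(f"linear XEB: ideal sampler {xeb_ideal:.3f}, uniform sampler {xeb_uniform:.3f}")
```

Under this definition a sampler that follows the ideal distribution scores near 1, and one that outputs uniformly random bitstrings scores near 0.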

- Reading the accuracy table: KL divergence and total variation distance summarize how far the sampled output distribution is from the ideal reference; smaller means closer agreement.

- Reproduce: Clone `RomanAILabs-Benchmark`, run `.\run.ps1`; results append to `results.csv` and `plot.png` is regenerated.

- Disclaimer: This project is intended for research and educational use. It simulates quantum circuits using classical computation and does not replace real quantum hardware.

Google Willow and real quantum hardware

Modern quantum processors such as Google’s Willow chip are real quantum hardware with over 100 physical qubits.

RQ4D-V4 is not a quantum computer. It is a classical simulation tool designed to model certain types of quantum circuits efficiently, especially when structure allows compression.

| Topic | Summary |
|---|---|
| Real quantum processors | Devices such as Google’s Willow are physical chips with many qubits, built to run quantum programs in the lab; they are a different category from a laptop simulator. |
| RQ4D-V4 on classical CPUs | Software that models selected circuits on ordinary computers: helpful for learning and research, not a substitute for hardware when you need native quantum experiments. |

When it works best

RQ4D-V4 performs best on:

- Low to moderate circuit depth

- Nearest-neighbor interactions

- Systems with limited entanglement

As circuit complexity increases, simulation becomes more difficult for all classical methods.

Why this matters

This allows researchers and developers to experiment with quantum-style algorithms without needing access to specialized quantum hardware.

4DLLM Studio

4DLLM Studio is bundled with the RomanAI 4D platform for qualified early-access partners. There is no public binary download from this marketing site — onboarding is gated to keep supply-chain risk predictable for sovereign teams.

Request access via the form below; we'll route you to signed artifacts and verification steps under NDA where required.

Decision framework

Claude vs RomanAI 4D — When to use which

Both can accelerate engineering. Only one is architected for adversarial trust boundaries.

| Dimension | Cloud agents (e.g. Claude) | RomanAI 4D Security Platform |
|---|---|---|
| Data residency | Prompts & context may transit vendor clouds | Stays on your metal, with an air-gapped option |
| Latency profile | Network-bound, multi-second tails | Sub-second local inference path (hardware dependent) |
| Threat modeling | Shared infra, opaque retention | Symmetric State Reflection + Temporal Tarpitting patterns |
| Best for | General coding assist, public repos | IR, malware triage, classified benches, purple-team sims |

This table compares deployment and trust boundaries, not feature parity or benchmark equivalence.

About 28% fewer viable exploit paths in internal red-team exercises. Quantum-resistant by design.

Air-gapped · Private 4D kernel · Symmetric State Reflection · Temporal Tarpitting · RQ4D active defense

Obfuscation and defense primitives ship in the product stack — not as compliance PDFs only.

Red-team measured hardening

Internal exercises across representative enterprise stacks showed materially fewer viable exploit chains against RomanAI 4D-hardened deployments versus default cloud-agent baselines — on the order of 28% fewer viable paths in our harnesses. Your mileage varies with configuration; we publish reproducible harnesses to partners.

Private 4D kernel

Geometric tensor paths and RQ4D hooks stay resident on-host. No shadow copies of your prompts in someone else's object store.

Quantum-resistant posture

Layered crypto agility plus quantum-inspired simulation lanes to stress future adversary capabilities before they hit production PKI.

Active defense primitives

Symmetric State Reflection, Temporal Tarpitting, and RQ4D spacetime forecasting turn brittle allow-lists into maneuver space.

Pricing

Premium platform pricing

Advisory hours, consultant subscriptions, or air-gapped enterprise — transparent tiers, no surprise egress fees.

Consultation / Advisory

Executive and technical guidance for air-gapped AI security, RQ4D architecture, and RomanAI 4D deployments.

- Stack reviews, threat modeling, and roadmap sessions

- 8–10 hour advisory packages available (scoped SOW)

- Written findings optional — under separate agreement

Solo Consultant

Single operator, full local stack, community support.

- RomanAI 4D GGUF runner (single GPU / CPU)

- RQ4D spacetime engine — dev tier limits

- Signed update channel

- Discord / forum access

Small Firm

Five seats, shared vault policies, priority patches.

- Everything in Solo

- Up to 5 seats + SSO hooks

- 4DLLM Studio (shared workspace)

- Quarterly architecture review

- CVE-critical SLA (business days)

Enterprise (on-prem)

$8k–$15k annual (scope dependent) + one-time setup fee.

- On-prem or classified enclave deploy

- Dedicated CSM + IR runbooks

- Custom RQ4D forecasting packs

- Hardware attestation integrations

- Professional services available

| Capability | Advisory | Solo | Small Firm | Enterprise |
|---|---|---|---|---|
| Hourly advisory & scoping | ✓ | — | Quarterly | ✓ |
| Local GGUF inference | — | ✓ | ✓ | ✓ |
| RQ4D active defense kernels | — | Limited | ✓ | ✓ |
| 4DLLM Studio | — | — | ✓ | ✓ |
| Air-gapped install kit | — | — | Add-on | ✓ |
| Signed binaries + provenance | — | ✓ | ✓ | ✓ |
| Custom integration / API | — | — | Light | ✓ |

Ready for sovereign cyber intelligence?

Book expert hours or join the early-access list — we’ll reply with install posture, signing keys, and onboarding windows.

General inquiries: daniel@romanailabs.com